In 1969, the world of Sesame Street introduced a pair of best friends. One goofy, one straight-laced. One short, one tall. One orange, one yellow. Despite their differences, these two men are so close that they have chosen to live together. Sesame Street insists: They are not a couple.

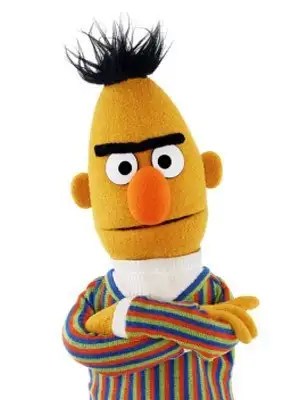

But that’s not what we’re here to discuss. How did this grumpy, unibrowed pineapple come to transform natural language processing?

Bidirectional Encoder Representations from Transformers (BERT) comes from applying ELMo’s two big ideas, general pretrained knowledge and context-aware word embeddings, to the then-novel Transformer architecture. Like Susan’s “One of these things is not like the others,” the way BERT learns is a sort of game.

Imagine reading a book with your child and covering a word with your finger. For instance, you might read “The Cat in the ____.” You ask, “What word might this be?” BERT makes these guesses billions of times on libraries’ worth of text, and trains its brilliant transformer mind to understand the construction of the English language. Like the children starting kindergarten in 1969, it starts out with the basics mastered. It can then be gently fine-tuned to specific tasks with a small fraction of the data a traditional network demands.
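If you'd like to play the finger-over-the-word game yourself, here is a toy sketch in Python. To be clear, this is not BERT: there is no transformer and no neural network, only a table that counts which word showed up between each pair of neighbors. The corpus, the `train` and `fill_mask` names, and the `<pad>` marker are all made up for illustration. What it does share with BERT is the core idea of guessing a hidden word from the words on both sides of it.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, standing in for "libraries' worth of text".
CORPUS = [
    "the cat in the hat",
    "we read the cat in the hat",
    "the cat sat on the mat",
    "a fish in the bowl",
]

MASK = "[MASK]"   # the finger covering a word
PAD = "<pad>"     # hypothetical marker for "edge of the sentence"


def train(sentences):
    """Count which word appears between each (left, right) neighbor pair."""
    table = defaultdict(Counter)
    for sentence in sentences:
        words = [PAD] + sentence.split() + [PAD]
        for i in range(1, len(words) - 1):
            table[(words[i - 1], words[i + 1])][words[i]] += 1
    return table


def fill_mask(table, sentence):
    """Guess the covered word using the words on both sides of it."""
    words = [PAD] + sentence.split() + [PAD]
    i = words.index(MASK)
    candidates = table.get((words[i - 1], words[i + 1]))
    if not candidates:
        return None  # never saw this pair of neighbors during training
    return candidates.most_common(1)[0][0]


table = train(CORPUS)
print(fill_mask(table, "the cat in the [MASK]"))
print(fill_mask(table, "the [MASK] in the hat"))
```

The real model swaps the lookup table for a transformer that reads the whole sentence at once, which is why it can make sensible guesses about sentences it has never seen, while this toy simply gives up and returns `None`.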

It should be no surprise, therefore, that BERT took the natural language processing world by storm. It is so popular that it has spawned hundreds of variants, ranging from FitBERT to FlauBERT to RoBERTa, and even models named for Ernie and Big Bird.

FitBERT – a disturbingly well-toned muppet and a tool that automatically fills in blanks in text

FlauBERT – the celebrated author of Madame Bovary and a French version of BERT

Let’s not forget when China’s Google competitor Baidu tried to create its own variant of BERT and got

ERNIE – Enhanced Representation through kNowledge IntEgration

You think I make things up? Follow the links.

This is what the Association for Computational Linguistics looks like these days. I went to a virtual conference recently, collected all the BERT images people included in their presentations, and got enough to make a collage.

Notice the other muppets and even references to Bumblebee from the Transformers movie series.

It may seem meaningless that researchers picked a goofy name for a popular model and the goofiness spread across the field, but keep one thing in mind. The vast majority of these researchers grew up in a world where Sesame Street is an established fact. They probably grew up watching the show, absorbing its lessons and accelerating their education. Now in the hallowed halls of academia, the height of education, sober-faced researchers pay homage to the beloved characters that started them off on their learning journeys.

Remember when I said Big Bird was a government experiment? I’d say this experiment was a huge success.

I take requests. If you have a fictional AI and wonder how it could work, or any other topic you’d like to see me cover, mention it in the comments or on my Facebook page.