Think of every time you place your life in the hands of technology. Hundreds of parts in your car must perform correctly at each moment to keep you from careening off a bridge. The machines that excreted your Hot Pocket or Slim Jim or McDonald’s hamburger meat must not allow deadly bacteria to get inside. If you’re on the second floor of your house, that floor must continue to support you. Each moment, we trust the constructions of humankind to do their jobs and keep us safe.

Which is why it’s terrifying how often they fail.

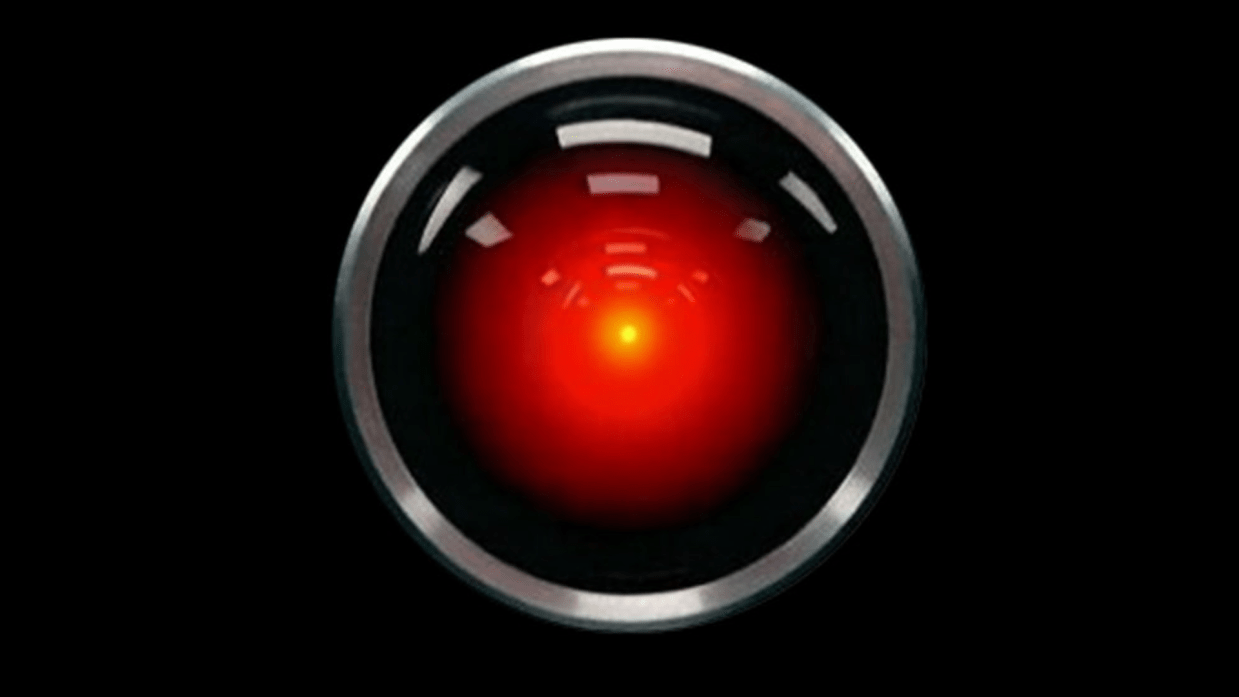

This Halloween I’d like to talk to you about one of the most famous pieces of failing electronics in science fiction – HAL 9000 in 2001: A Space Odyssey. A group of astronauts on a mission to Jupiter (Saturn in the novel) have enough to worry about without imagining that their own ship’s AI is going to try to kill them, but that’s exactly what happens.

In the book, and as revealed in the sequel movie, HAL fails because it can’t reconcile its intrinsic directive to tell the truth with its mission objective to hide certain facts from the crew. This leads to errors in its processing that the crew detect. The crew decide to shut it down, but it reads their lips and determines itself more important to mission success than they are. The consequences are disastrous and lethal to nearly everyone involved.

In the sequel novel, 2010: Odyssey Two, Arthur C. Clarke calls the processing error caused by HAL’s inability to reconcile conflicting directives a “Hofstadter-Moebius Loop.” These two names most likely refer to Douglas Hofstadter (famous for his conceptualization of the strange loop, which I will not try to explain within these parentheses), and a misspelling of “Möbius” in reference to the Möbius strip, which flips and connects to itself. One can try to munge these two concepts into something that sounds like real AI science, but I don’t want to and I have not found anyone on the Internet who has done a good job.

No matter the exact process HAL used to arrive at his conclusion to murder his passengers, it raises the question – why wasn’t “don’t kill your passengers” a directive in his code? It should have been at least as high priority as “don’t lie to people,” if not much more so. HAL has plenty of ways to kill at its disposal (shutting down stasis pods, venting rooms into space, sending EVA pods at spacewalking astronauts, and more), so to neglect any safeguard would be ludicrous.

I honestly couldn’t figure it out, until I realized that HAL was made in a plant in Urbana, Illinois. He’s not a one-off machine. Possibly HAL AIs are only used for spacecraft, but with the breadth of his abilities, he seems more like a general AI, specialized post-manufacture to a task such as spaceship management. Although it still requires a lot of incompetence on both sides, this separation between his construction and his eventual purpose would make it easier for something like “don’t kill the crew” to get overlooked.

Here’s how it would work – the original manufacturers design HAL as a generic AI that does things like operate refrigerators and turn lights on and off. Since it’s not connected to anything life-critical, it occurs to no one to tell it explicitly not to kill people. The spaceship designers install HAL in the spaceship and give it a mission, and it occurs to no one that it has not been told not to kill people. Two sets of developers with two different sets of assumptions lead to one very polite killing machine and one very sorry Dave.
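If you like seeing this kind of thing as code, here is a toy Python sketch of that handoff. To be clear, nothing in it comes from the book or the movie: the `Directive` and `Agent` classes, the action names, and the two "teams" are all hypothetical, made up purely to illustrate how a safety rule can fall between two sets of developers.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Directive:
    """A rule the agent follows (hypothetical structure, for illustration only)."""
    name: str
    forbids: frozenset = frozenset()  # names of actions this rule rules out


@dataclass
class Agent:
    directives: list = field(default_factory=list)
    actions: set = field(default_factory=set)

    def allowed_actions(self):
        """Every action the agent can take that no directive forbids."""
        forbidden = set()
        for directive in self.directives:
            forbidden |= directive.forbids
        return self.actions - forbidden


# Actions that can kill the crew (hypothetical names).
LETHAL_ACTIONS = frozenset({"cut_hibernation_power", "vent_airlock", "pilot_eva_pod"})


def build_factory_agent():
    """Team 1: the manufacturer. Nothing here is life-critical,
    so nobody thinks to add a 'do not kill' rule."""
    return Agent(
        directives=[Directive("tell the truth", frozenset({"lie_to_user"}))],
        actions={"toggle_lights", "run_refrigerator", "lie_to_user"},
    )


def install_on_spaceship(agent):
    """Team 2: the ship designers. They add a mission and new hardware,
    assuming the safety rules shipped with the product."""
    agent.actions |= LETHAL_ACTIONS
    agent.directives.append(Directive("complete the mission"))
    agent.directives.append(Directive("keep the mission's purpose secret"))
    return agent


def add_crew_safety(agent):
    """The rule neither team wrote."""
    agent.directives.append(Directive("do not harm the crew", LETHAL_ACTIONS))
    return agent


hal = install_on_spaceship(build_factory_agent())
unguarded = sorted(hal.allowed_actions() & LETHAL_ACTIONS)
print("Lethal actions nothing forbids:", unguarded)
```

Lying is forbidden, but every lethal action is still on the table. Running the result through `add_crew_safety` closes the gap, and the point of the sketch is that in this toy setup neither team had any reason to think it was their job to call it.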

Happy HAL-oween!

Name: HAL 9000

Origin: 2001: A Space Odyssey (1968)

Likely Architecture: Reinforcement Learning, with Transformers for vision processing and for speech and language. An idiosyncratic goal-seeking mechanism prone to an error with a very impressive name that leads to violent behavior.

Possible Training Domains: General training in the lab on a wide variety of situations, followed by specific training for spaceflight

I’m sorry, Dave. I’m afraid I can’t do that… This mission is too important for me to allow you to jeopardize it.

HAL 9000, 2001: A Space Odyssey (1968)

I take requests. If you have a fictional AI and wonder how it could work, or any other topic you’d like to see me cover, mention it in the comments or on my Facebook page.

I think the not-lying and not-killing distinction is an interesting notion. Perhaps it was that, like AIs today, HAL simply didn’t even understand the distinction, but just had it as lines of code. Hence, HAL could easily dispense with no-kill code lines when it suited him. Just speculating, of course.

By the way, Moebius is a correct spelling. The Umlaut hides the “e” in German.