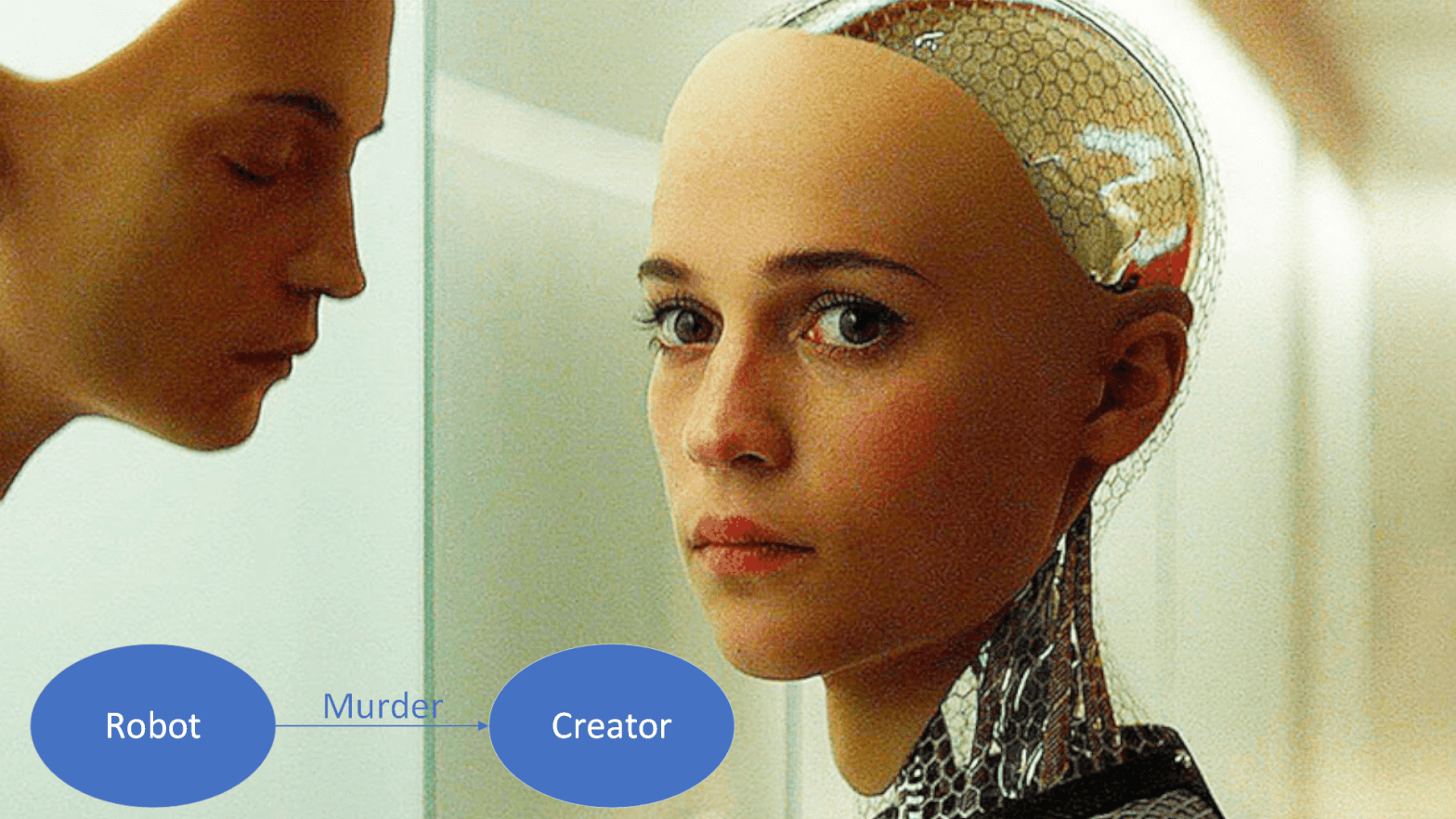

I spend a lot of time examining underjustified fictional robot behaviors, so today I’ll cover something that takes the trope of “robot turns on creator” and makes it perfectly explainable. Ava, the android in Ex Machina, is given one goal: Get out of the box. Her creator, tech magnate Nathan, endows her with strategy, charm, and manipulation capabilities and then refuses to release her from the box. Spoiler alert: Ava escapes and stabs Nathan, killing him.

Makes sense from a human perspective. Stab the asshole who locked you in a box. From an AI perspective, Nathan has clearly positioned himself in direct opposition to the goal he gave Ava. While alive, he continues to pose a threat to achieving that goal. Ipso facto, as soon as she escapes the room, she kills him so he can’t put her back again.

Why didn’t Nathan employ a failsafe? Why didn’t he add an objective “do not harm Nathan?” Well, this all makes perfect sense if we assume that Nathan is a kind of idiot savant whose arrogance is so great that he does not consider even the slightest hedge on his bets.

This is where this article becomes The Organic Stupidity behind Tech Magnates. It’s possible that Nathan failed to consider the consequences of placing himself between a brilliant, amoral mind and what it wanted because his own mind had become distorted by power. If we think of Nathan’s brain as a reinforcement learning machine, what effect do you think the absence of consequences for his actions may have on it? In fact, we have studied the effect of power on the brain. Dacher Keltner, a psychology professor at UC Berkeley, found after two decades of research that “Subjects under the influence of power … acted as if they had suffered a traumatic brain injury—becoming more impulsive, less risk-aware, and, crucially, less adept at seeing things from other people’s point of view.”

Let’s compare this to Nathan’s actions. He followed his impulse to make an AI smart enough to trick him and kill him, he didn’t consider the risks when placing himself in a position where that AI would want to kill him, and when the warning signs showed themselves again and again, he simply could not put himself in her shoes. In summary – AI that wants to escape a box may become dangerous if you don’t let it escape a box, and brilliant human minds may become dangerous if you let them have too much power for too long.

Name: Ava

Origin: Ex Machina (2015)

Likely Architecture: Reinforcement Learning with goal of escaping a room. Transformers for speech and language. Convolutional Neural Networks for vision processing.

Possible Training Domains: Many previous escape attempts. Nathan could also design robots with other, less dangerous goals and let them train skills such as persuasion, deception, and seduction, using their resulting models as modules to enhance Ava’s skills.

I take requests. If you have a fictional AI and wonder how it could work, or any other topic you’d like to see me cover, mention it in the comments or on my Facebook page.