Scud, the disposable assassin, is a brand of robot in the comic book series of the same name: an automated hitman dispensed from a vending machine. On his mission to kill Jeff, a mutant with mousetraps for hands, an electrical plug for a head, and a squid for a belt [1], one particular Scud looks in a bathroom mirror and finds a warning on his body that says that once his mission is complete he will automatically self-destruct. Naturally, he doesn’t want that, so he ends up putting Jeff on life support instead and taking freelance hits to pay her medical bills.

If you’re paying attention you might notice that I used the term “naturally” when describing robot behavior. Human and animal behavior naturally seeks survival because this desire supports the goal of their genes: to be passed on to the next generation. A robot was not designed by the forces of evolution, so there’s nothing natural about its behavior. In an animal, every behavior emerges from its genetic predisposition and its environment. In a robot, every behavior emerges from its fundamental programming and, if it learns, its environment. Even if it is intelligent and rational, as Scud is, that does not guarantee that it will seek self-preservation.

Of course, as I always say, it’s easy to poke holes, harder to fill them. Why would a robot seek self-preservation over completing its objective?

- It’s programmed to. This is the reason given for Scud. He is programmed to preserve himself, but the fact that he chooses not to complete his mission in order to achieve this raises the question of why survival was programmed to outweigh serving his designed purpose.

- Survival is an intentional emergent property in service of another goal. This is why animals seek to live. As evidence, watch My Octopus Teacher. Spoiler alert: bring some Kleenex for the end. As part of bearing her young, the octopus gives up her strength and ends up gray and barely alive on the ocean floor until some little sharks come and eat her. For all the beauty the octopus brought to the world, all the scuffles she survived and relationships she formed, in the end the genes that built her regard her as nothing more than a vehicle for their own propagation. Sometimes evolution is a jerk. In any case, we see that Scud has overridden his primary objective in order to survive, so this can’t apply to him.

- Survival is an unintentional emergent property. You could also call this a “bug,” but it’s the sort of thing that filled Isaac Asimov’s classic AI novels. His positronic-brained robots ran on his three famous laws, but these laws were always coming into conflict, and sometimes for one reason or another, the priority ordering did not function as intended. The question is how Scud’s survival objective became more strongly expressed than his objective to complete his purpose.

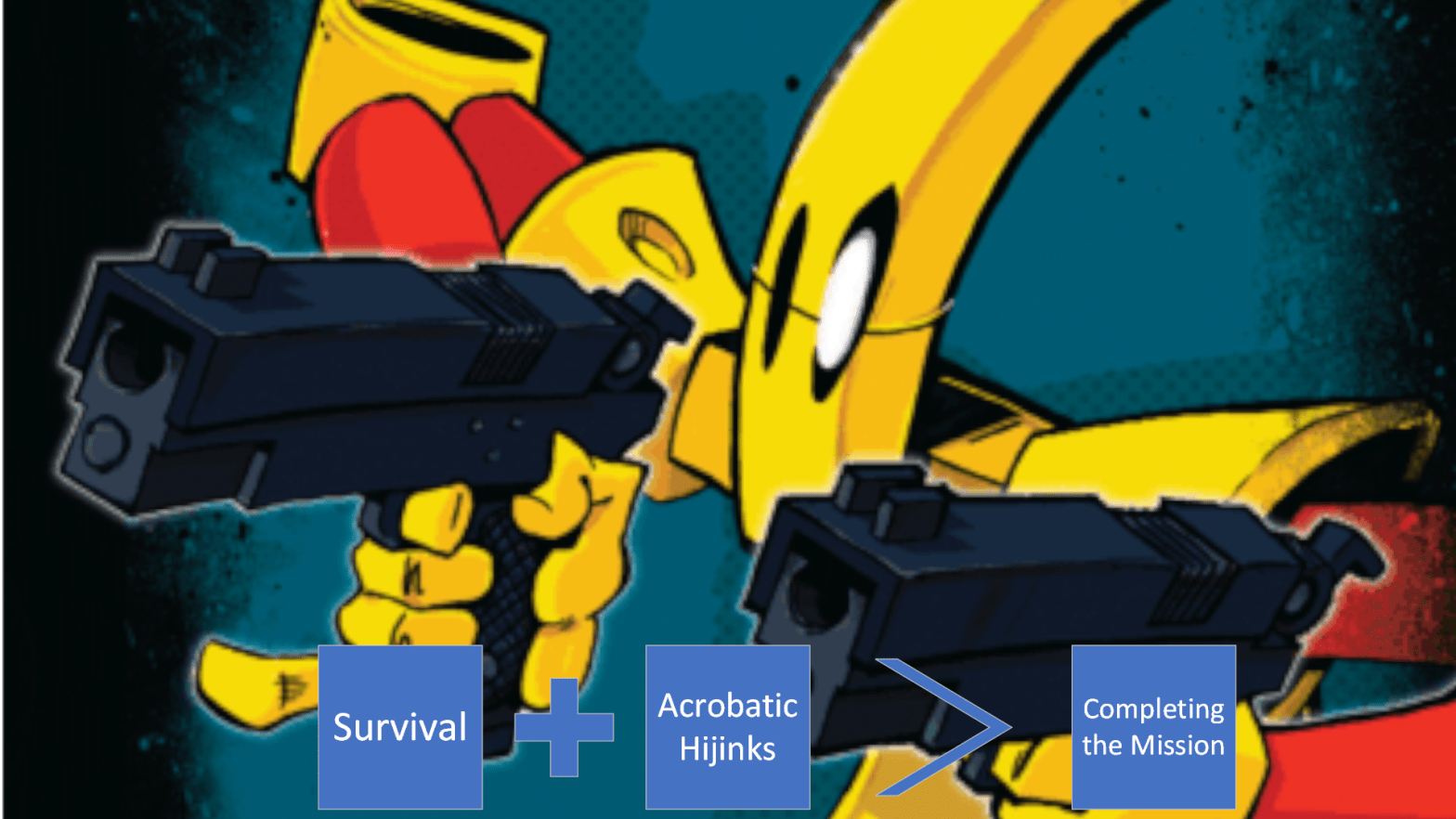

There are any number of ways that the third option could happen, but I’ll suggest one. Let’s assume a deep reinforcement learning model, perhaps an advanced version of ones already in use to train acrobatic drones. We also assume that the overall objective determining his behavior is a simple sum of the individual objectives. A wise programmer would weight his primary objective more heavily than his secondary objectives, but let’s assume that, since he only costs three franks, his craftsmanship is similarly cheap. Therefore, survival is on equal footing with mission completion. At that point, Scud would be unable to decide whether to kill his target or not. But let’s assume that those aren’t the only two objectives. In addition, Scud wants to engage in acrobatic hijinks.

All of a sudden, Scud has a tie-breaker. If he completes his objective, great, but if he dies that’s bad and also he will no longer be able to engage in acrobatic hijinks, so it’s even worse. In human terms, because Scud likes hijinks, he likes being alive just a little bit more than completing his mission.
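To make the tie-breaker concrete, here’s a minimal sketch in Python of the summed-objective idea. Everything in it is an assumption for illustration: the objective names, the made-up reward values, and the helper functions `total_reward` and `best_actions` are hypothetical, and a real reinforcement learning agent would learn its values rather than read them from a table.

```python
# Hypothetical payoffs for each choice. The numbers are invented for illustration:
# completing the mission triggers self-destruct, so survival and future hijinks are 0.
OUTCOMES = {
    "complete_mission": {"mission": 1.0, "survival": 0.0, "hijinks": 0.0},
    "spare_target": {"mission": 0.0, "survival": 1.0, "hijinks": 0.1},
}


def total_reward(outcome, weights):
    """Overall objective as a simple weighted sum of the individual objectives."""
    return sum(weights.get(name, 0.0) * value for name, value in outcome.items())


def best_actions(weights):
    """Return every action that reaches the highest total (more than one means a tie)."""
    scores = {action: total_reward(o, weights) for action, o in OUTCOMES.items()}
    top = max(scores.values())
    return sorted(action for action, score in scores.items() if score == top)


# Cheap craftsmanship: mission and survival weighted equally, nothing else.
two_objectives = {"mission": 1.0, "survival": 1.0}
# Same weights, plus a taste for acrobatic hijinks.
three_objectives = {"mission": 1.0, "survival": 1.0, "hijinks": 1.0}
# What a wise programmer might do: weight the mission more heavily.
careful_weights = {"mission": 2.0, "survival": 1.0, "hijinks": 1.0}

print(best_actions(two_objectives))
print(best_actions(three_objectives))
print(best_actions(careful_weights))
```

With only two equally weighted objectives, both actions come back as a tie. Adding even a small hijinks payoff tips the sum toward sparing the target, while doubling the mission weight tips it back toward finishing the job.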

Name: Scud 1373

Origin: Scud the Disposable Assassin (1994)

Likely Architecture: Reinforcement Learning, Convolutional Neural Networks for vision processing, and Transformers for Speech and Language. Reinforcement Learning in particular is ideal for learning the sort of acrobatics that Scud performs in the series.

Possible Training Domains: Many, many hours in a virtual physics environment to learn basic and then acrobatic movement. Collective data from thousands of previous assassinations.

I take requests. If you have a fictional AI and wonder how it could work, or any other topic you’d like to see me cover, mention it in the comments or on my Facebook page.